The Asus ROG Swift PG27UQ G-SYNC HDR Monitor Review: Gaming With All The Bells and Whistles

by Nate Oh on October 2, 2018 10:00 AM EST

Posted in: Monitors, Displays, Asus, NVIDIA, G-Sync, PG27UQ, ROG Swift PG27UQ, G-Sync HDR

Closing Thoughts

Bringing this review to a close, the ROG Swift PG27UQ has some subtleties as it is just as much a ‘G-Sync HDR’ monitor as it is an ROG Swift 4Kp144 HDR monitor. In terms of panel quality and color reproduction, the PG27UQ is excellent by our tests. As a whole, the monitor comes with some slight design compromises: design bulkiness, active cooling, limited connectivity. However, those aspects aren’t severe enough to be dealbreakers except in very specific scenarios, such as for silent PC setups. Given the pricing and capabilities, the PG27UQ is destined to be paired with the highest end graphics cards; for a 4K 144Hz target, multi-GPU with SLI is the only – and pricy – solution for more intensive games.

And on that note, therein lies the main nuance with the PG27UQ. The $2000 price point is firmly in the ultra-high-end based on the specific combination of functionalities that the display offers: 4K, 144Hz, G-Sync, and DisplayHDR 1000-level HDR.

For ‘price is no object’ types, this is hardly a concern if the ROG Swift PG27UQ can hit all those well – and it does. But if price is at least somewhat of a consideration – and for the vast majority, it still is – then not using all those features simultaneously means not utilizing the full value of the monitor, and at $2000 this is already including an existing premium. The use-cases where all those features would be used simultaneously, that is, HDR games, are somewhat limited due to the nature of HDR support in PC games, as well as the horsepower of graphics cards currently on the market.

The graphics hardware landscape brings us to the other idea behind getting a monitor of this caliber: futureproofing. At this time, even the GeForce RTX 2080 Ti is not capable of reaching much beyond 80fps or so, and with NVIDIA stepping back from SLI, especially with 2+ way configurations, multi-GPU options are somewhat unpredictable and require more user-configuration. This could be particularly problematic depending on the nature of the HDR with 4:2:2 chroma subsampling performance hit for Pascal cards. Though this could go both ways, as some gamers expect minimal user configuration for products at the upper end of ‘premium’.
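As a rough illustration of that gap, the sketch below works out how much more framerate would be needed to fill a 144Hz refresh rate, starting from the approximate 80fps figure quoted above. The function name is purely illustrative, and the calculation says nothing about how well any real multi-GPU setup would scale.

```python
def required_speedup(current_fps: float, target_hz: float) -> float:
    """Return the factor by which framerate must rise to match the refresh rate."""
    if current_fps <= 0:
        raise ValueError("current_fps must be positive")
    return target_hz / current_fps


# Approximate single-card 4K framerate from the text vs. the panel's 144Hz peak.
speedup = required_speedup(80, 144)
print(f"{speedup:.2f}x")  # 1.80x
```

In other words, a second card would need to deliver close to ideal scaling just to approach the panel's maximum refresh rate.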

On the face of it, this is the type of monitor that demands ‘next-generation graphics’, and fortunately we have the benefit of NVIDIA’s announcement – and now launch – of Turing and GeForce RTX GPUs. In looking to that next generation, G-Sync HDR monitors are put in an awkward position. We still don’t know the extent of performance on Turing hybrid rendering with real-time ray tracing effects, but that feature is clearly the primary focus, if the branding ‘GeForce RTX’ wasn’t already clear enough. For traditional rendering in games (i.e. ‘out-of-the-box’ performance in most games), for 4K performance we saw the RTX 2080 Ti as 32% faster than the GTX 1080 Ti, reference-to-reference, and the RTX 2080 as around 8% faster than the GTX 1080 Ti and 35% faster than the GTX 1080. In the mix is the premium pricing of the GeForce RTX 2080 Ti, 2080, and 2070, of which only the 2080 Ti and 2080 support SLI.
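Those percentages are easier to compare when chained onto a single baseline. The following is a minimal sketch that normalizes everything to the GTX 1080, using only the three ratios quoted above; the constant and function names are our own, and because the published figures are rounded, the results are approximations rather than measurements.

```python
# Ratios quoted in the text (4K, traditional rendering).
RTX_2080_TI_OVER_GTX_1080_TI = 1.32
RTX_2080_OVER_GTX_1080_TI = 1.08
RTX_2080_OVER_GTX_1080 = 1.35


def relative_performance() -> dict:
    """Express each card relative to the GTX 1080 (= 1.0) by chaining the ratios."""
    gtx_1080 = 1.0
    rtx_2080 = gtx_1080 * RTX_2080_OVER_GTX_1080
    gtx_1080_ti = rtx_2080 / RTX_2080_OVER_GTX_1080_TI
    rtx_2080_ti = gtx_1080_ti * RTX_2080_TI_OVER_GTX_1080_TI
    return {
        "GTX 1080": gtx_1080,
        "GTX 1080 Ti": gtx_1080_ti,
        "RTX 2080": rtx_2080,
        "RTX 2080 Ti": rtx_2080_ti,
    }


for card, score in relative_performance().items():
    print(f"{card}: {score:.2f}x")
```

On these numbers the RTX 2080 Ti lands at roughly 1.65x a GTX 1080, and about 1.22x an RTX 2080.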

Although this is really a topic to revisit after RTX support rolls out in games, Turing and its successors matter if only because this is a forward-looking monitor, and using G-Sync (and thus VRR) means using NVIDIA cards. And for modern ultra-high-end gaming monitors, VRR is simply mandatory. Given that Turing’s focus is on new feature sets rather than purely on raw traditional performance over Pascal, it somewhat plays against the idea of ‘144Hz 4K HDR with variable refresh’ as the near-future ‘ultimate gaming experience’, presumably in favor of real-time raytracing and the like. So enthusiasts might be faced with a quandary where enabling real-time raytracing effects means forgoing 4K resolution and/or ultra-high refresh rates, while even for traditional non-raytraced rendering the framerate is still lacking. Again, details won’t become clear until we see the intensity of hybrid rendered game workloads, but this is absolutely something to keep in mind, because not only are ultra-high-end gaming monitors and ultra-high-end graphics cards joined at the hip, but the former also tend to have longer upgrade/replacement cycles than the latter.

With futureproofing, and to a lesser extent early adoption, consumers are paying a premium on the expectation that they will fully utilize the features at some point, and that the device in question will still be viable until then. But if there is hard divergence from that vision of the future, then some of those features might not be fully utilized for quite some time. For the PG27UQ, it’s clear that the panel quality and HDR capability will keep it viable for quite some time, but right now the rest of the situation is unclear.

Returning to the here-and-now, there are a few general caveats for a prospective buyer. Utilizing HDMI will work with HDR input sources (limited to 60Hz max), but the G-Sync functionality is unused with current generation HDR consoles, which support FreeSync. The monitor is not intended for double-duty as a professional visualization monitor, and for business/productivity purposes the HDR/VRR is not generally useful, and the 4:2:2 chroma subsampling modes may be an issue for clear text reproduction.

On the brightness side, the HDR white and black levels, and the contrast ratios are excellent; with Windows 10 HDR mode these features can be utilized outside of HDR content. The ROG Swift PG27UQ is well-calibrated out-of-the-box, which can’t be overstated as most people don’t calibrate monitors. The FALD operates with good uniformity, and color reproduction matches well under both HDR and SDR gamuts.

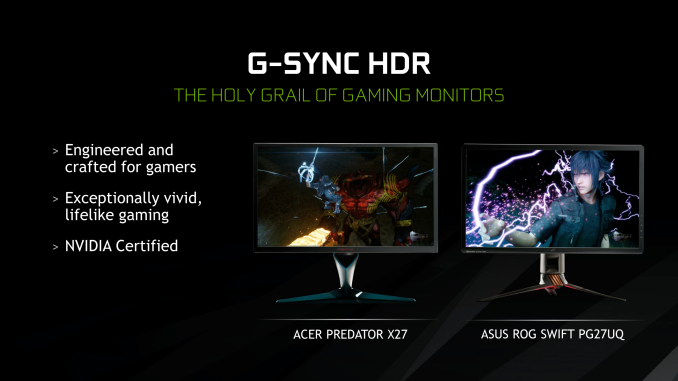

As for the $2000 price point, and the monitor itself, it all comes down to gaming with all the bells and whistles of PC display technology: 4K, 144Hz, G-Sync, HDR10 with FALD and peak 1000 nits brightness. Market-wise, there isn’t a true option that is a step below this, as right now, the PG27UQ and Acer variant are the only choices if gamers are looking for either high refresh rates on a 4K G-Sync monitor, or a G-Sync monitor that supports HDR.

So seeking either combination leaves consumers to have to step up to the G-Sync HDR products. Nonetheless, Acer did recently announce a DisplayHDR 400 variant without quantum dots or FALD, set at $1299 and due to launch in Q4. However, without QD, FALD, or DisplayHDR 1000/600 capabilities, HDR functionality is on the minimal side, and it’s telling that the monitor is specced as a G-Sync monitor rather than G-Sync HDR. As far as we know, there isn’t an upcoming intermediate panel in the vein of a 1440p 144Hz G-Sync HDR product, which would be less able to justify a premium margin.

But because the monitor is focused on HDR gaming, the situation with OS and video game support needs to be noted, though again we should reiterate that this is outside Asus’ control. There is a limited selection of games with HDR support, which doesn’t always equate to HDR10, and of those games not all are developed or designed to utilize HDR’s breadth of brightness and color. Windows 10 support for HDR displays has improved considerably, but is still a work-in-progress. All of this is to say that HDR gaming is baked into the $2000, and purchasing it for primarily high refresh rate 4K gaming effectively increases the premium that the consumer would be paying.

So essentially, gamers will not get the best value of the PG27UQ unless they:

- Regularly play or will play games that support HDR, ideally ones that use HDR very well

- Have or will have the graphics horsepower to go beyond 4K 60fps in those games, and are willing to deal with SLI if necessary to achieve that

- Are willing to deal with maturing HDR support in video games, software, and Windows 10

Again, if price is no object, then these points don't matter from a value perspective. And if consumers fit the criteria, then the PG27UQ deserves serious consideration, because presently there is no other class of monitor that can provide the gaming experience that G-Sync HDR monitors like the ROG Swift PG27UQ can. Asus's monitor packs in every bell and whistle imaginable on a PC gaming monitor, and the end result is that, outside of Acer's twin monitor, the PG27UQ is unparalleled with respect to its feature set, and is among the best monitors out there in terms of image quality.

But if price is still a factor – as it so often is when playing on the bleeding edge of technology – consumers will have to keep in mind that they might be paying a premium for features they may not regularly use, will use much later in the future than anticipated, or will cost more than expected to use (e.g. the cost of dual RTX 2080 Tis). In the case of GeForce RTX cards, you might end up in a waiting situation for titles to release with HDR and/or RTX support, whereupon the card would still not push the PG27UQ's capabilities to the max.

On that note, the situation relies a lot on media consumption habits, not only in terms of HDR games or HDR video content but also in terms of console usage, preference for indie over AA/AAA games, and preference for older versus newer titles. If $2000 is an affordable amount, that budget could encompass two quality displays that together may better suit individual use-case scenarios, for example, Asus' $600 to $700 PG279Q (1440p 165Hz G-Sync IPS 27-in) monitor paired with a $1300 4K HDR 27-in professional monitor with peak 1000 nit luminance. Or instead of a professional HDR monitor, an entry or mid-level 4K HDR TV in the $550 to $1000 range.

Wrapping things up, if it sounds like this is equal parts a conclusion of G-Sync HDR as much as it is of the ROG Swift PG27UQ, it is because it is. G-Sync HDR currently exists as the Asus and Acer 27-in models, and those G-Sync HDR capabilities are what is driving the price; NVIDIA’s G-Sync HDR is not just context or a single feature, it is intrinsically intertwined with the PG27UQ.

Though this is not to say the ROG Swift PG27UQ is flawed. It’s not the perfect package, but the panel provides combined qualities that no other gaming monitor, excluding the Acer variant, can offer. As a consumer-focused site we can never ignore the importance of price in evaluating a product, but just putting that aside for the briefest of moments, it really is an awesome monitor that is well beyond what any other monitor can deliver today. It just costs more than what most gamers will ever consider paying for a monitor, and the nuances of the monitor, G-Sync HDR, and HDR gaming mean that $2000 might be more than expected for how you use it.

Ultimately the PG27UQ is the first of many G-Sync HDR monitors. And as the technology matures, hopefully we'll see these monitors further improve and the price drop. However, for the near future, the schedule slip of the 27-inch G-Sync HDR models doesn’t bode well for the indeterminate timeframe of the 35-in ultrawides and 65-in BFGDs. So if you want the best right now – and what's very likely to be the best 27-inch monitor for at least the next year or two to come – this is it.

91 Comments

Flunk - Tuesday, October 2, 2018 - link

I'd really like one of these, but I can't really justify $2000 because I know that in 6 months to a year competition will arrive that severely undercuts this price.

imaheadcase - Tuesday, October 2, 2018 - link

That's just technology in general. But keep an eye out, around that time this monitor is coming out with a revision that will remove the "gaming" features but still maintain refresh rate and size.

edzieba - Tuesday, October 2, 2018 - link

The big omission to watch out for is the FALD backlight. Without that, HDR cannot be achieved outside of an OLED panel (and even then OLED cannot yet meet the peak luminance levels). You're going to see a lot of monitors that are effectively SDR panels with the brightness turned up, and sold as 'HDR'. If you're old enough to remember when HDTV was rolling out, remember the wave of budget 'HD' TVs that used SD panels but accepted and downsampled HD inputs? Same situation here.

Hixbot - Tuesday, October 2, 2018 - link

Pretty sure edgelit displays can hit the higher gamut by using a quantum dot filter.

DanNeely - Tuesday, October 2, 2018 - link

Quantum dots increase the color gamut; HDR is about increasing the luminescence range on screen at any time. Edge lit displays only have a handful of dimming zones at most (no way to get more when your control consists of only 1 configurable value per row/column). You need back lighting where each small chunk of the screen can be controlled independently to get anything approaching a decent result. Per pixel is best, but only doable with OLED or jumbotron size displays. (MicroLED - we can barely make normal LEDs small enough for this scale.) OTOH if costs can be brought down microLED does have the potential to power a FALD backlight with an order of magnitude or more additional dimming zones than current LCD models can do; enough to largely make halo effects around bright objects a negligible issue.

Lolimaster - Tuesday, October 2, 2018 - link

There is also miniled that will replace regular led for the backlight.

Microled = OLED competition

Miniled up to 50,000zones (cheap "premium phones" will come with 48zones).

crimsonson - Tuesday, October 2, 2018 - link

I think you are exaggerating a bit. HDR is just a transform function. There are several standards that say what the peak luminance should be to be considered HDR10 or Dolby Vision etc. But that itself is misleading.

Define " (and even then OLED cannot yet meet the peak luminance levels)"

Because OLED can def reach 600+ nits, which is one of the standards for HDR being proposed.

edzieba - Tuesday, October 2, 2018 - link

"HDR is just a transform function"

Just A transform function? [Laughs in Hybrid Log Gamma],

Joking aside, HDR is also a set of minimum requirements. Claiming panels that do not even come close to meeting those requirements are also HDR is akin to claiming that 720x468 is HD, because "it's just a resolution". The requirements range far beyond just peak luminance levels, which is why merely slapping a big-ass backlight to a panel and claiming it is 'HDR' is nonsense.

crimsonson - Wednesday, October 3, 2018 - link

"Just A transform function? [Laughs in Hybrid Log Gamma],"

And HLG is again just a standard of how to handle HDR and SDR. It is not required or needed to display HDR images.

"HDR is also a set of minimum requirements"

No, there are STANDARDS that attempt to address HDR features across products and in video production. But that in itself does not mean violating those standards equates to a non-HDR image. Dolby Vision, for example, supports dynamic metadata. HDR10 does not. Does that make HDR10 NOT HDR?

Eventually, the market and the industry will congregate behind 1 or 2 SETS of standards (since it is not only about 1 number or feature). But we are not there yet. Far from it.

Since you like referencing these standards, you do know that Vesa has HDR standards as low as 400 and 600 nits right?

And I think you are conflating wide gamut vs Dynamic Range. FALD is not needed to achieve wide gamut.

And using HD to illustrate your points exemplifies you don't understand how standards work in broadcast and manufacturing.

edzieba - Thursday, October 4, 2018 - link

"And HLG is again just a standard of how to handle HDR and SDR. It is not required or needed to display HDR images."

The joke was that there are already at least 3 standards of HDR transfer functions, and some (e.g. Dolby Vision) allow for on the fly modification of the transfer function.

"And I think you are conflating wide gamut vs Dynamic Range. FALD is not needed to achieve wide gamut."

Nobody mentioned gamut. High Dynamic Range requires, as the name implies, a high dynamic range. LCD panels cannot achieve that high dynamic range on their own, they need a segmented backlight modulator to do so.

As much as marketers would want you to believe otherwise, a straight LCD panel with an edge-lit backlight is not going to provide HDR.

"And using HD to illustrate your points exemplifies you don't understand how standards work in broadcast and manufacturing."

Remember how "HD ready" was brought in to address exactly the same problem of devices marketing capabilities they did not have? And how it brought complaints about allowing 720p devices to also advertise themselves as "HD Ready"? Is this not analogous to the current situation where HDR is being erroneously applied to panels that cannot achieve it, and how VESA's DisplayHDR has complaints that anything below Display HDR1000 is basically worthless?